Correlation Matrix becomes unresponsive

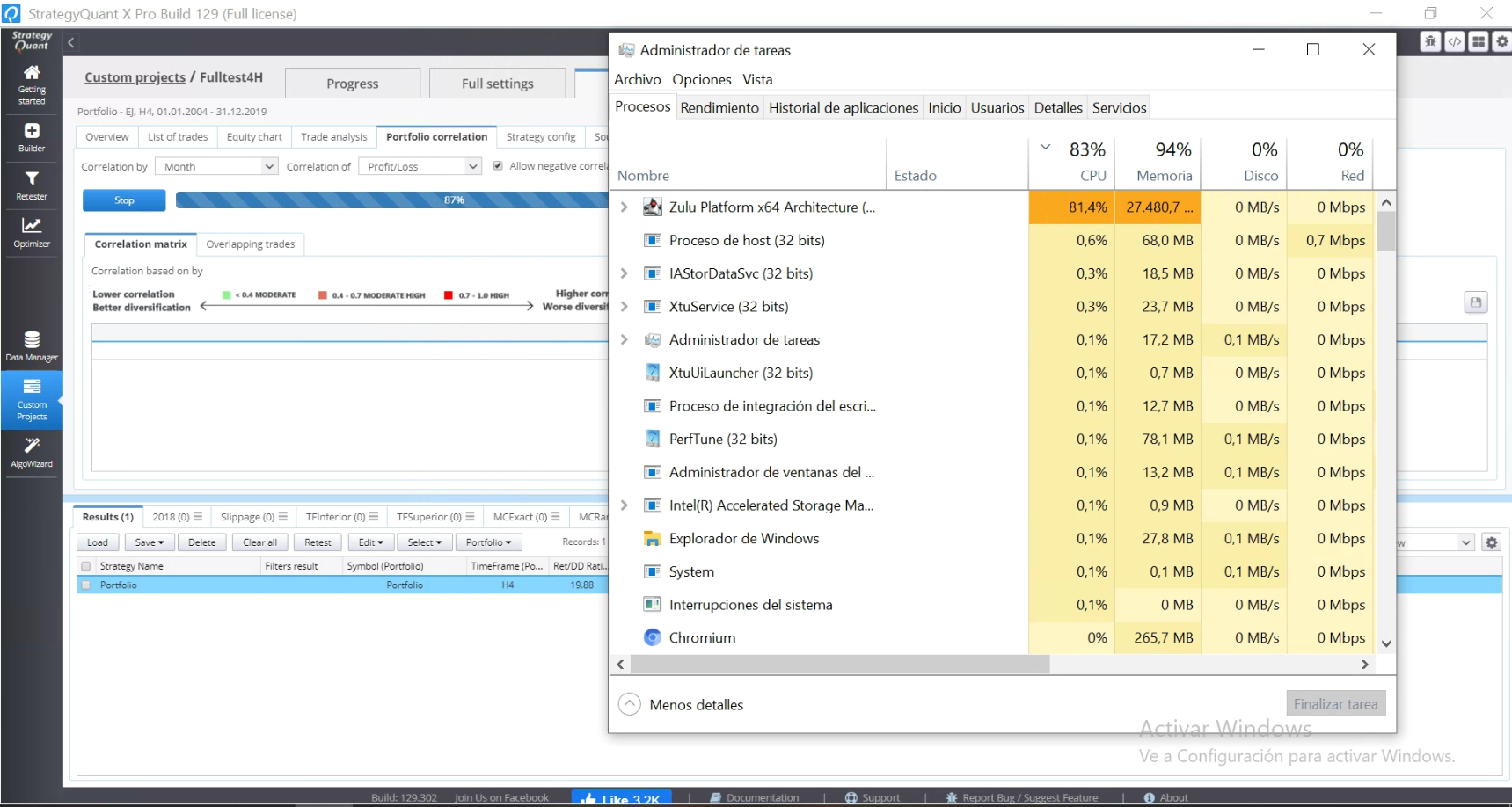

I have been experimenting with different numbers of strategies in a portfolio, computing the correlation matrix in order to filter out similar strategies. I have found strange behaviour when the portfolio has more than 200 strategies and the computation is past 50%. Up to 50% it uses about 4-5 GB of memory, but after that it fills the memory within one to two minutes and rarely manages to finish the full correlation matrix. In this case I am using 600 strategies on the 4-hour timeframe with 1-minute data over 15 years, but I have tried different settings and numbers of strategies and the behaviour is the same. It happens regardless of whether I use daily, weekly or monthly frequency. The only difference is that with weekly and monthly correlation it reaches 50% faster, but after that it fills the memory and becomes unresponsive.

Also, with the GPU accelerated option enabled, I have noticed that a correlation over 100 strategies makes the UI unresponsive, whereas with it turned off the UI works smoothly. This only happens to me with the correlation matrix over 100 strategies; all other tasks work very well with the GPU option on.
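For a sense of scale, here is a minimal sketch of a correlation matrix computed at a chosen frequency. It assumes each strategy's profit is available as a daily series in a NumPy array, with one column per strategy. This is not how StrategyQuant X computes the matrix internally; `correlation_matrix` and `period_days` are hypothetical names used only for illustration. Note that the final 600 x 600 matrix of 64-bit floats occupies only about 2.9 MB.

```python
import numpy as np

def correlation_matrix(daily_pnl: np.ndarray, period_days: int = 1) -> np.ndarray:
    """Pearson correlation between strategies (columns) after summing daily P&L
    into non-overlapping buckets of `period_days` days (e.g. 5 ~ weekly)."""
    n_days, n_strategies = daily_pnl.shape
    n_periods = n_days // period_days            # drop the incomplete trailing bucket
    trimmed = daily_pnl[: n_periods * period_days]
    # Sum each bucket: shape (periods, days_per_bucket, strategies) -> (periods, strategies)
    per_period = trimmed.reshape(n_periods, period_days, n_strategies).sum(axis=1)
    # rowvar=False: each column is one variable (strategy)
    return np.corrcoef(per_period, rowvar=False)

# Hypothetical data: ~15 years of trading days for 600 strategies
rng = np.random.default_rng(0)
pnl = rng.normal(size=(15 * 252, 600))
corr = correlation_matrix(pnl, period_days=5)
print(corr.shape, corr.nbytes)  # (600, 600) 2880000
```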

Attachments

Votes +1

Project StrategyQuant X

Type Bug

Status Fixed

Priority Normal

History

Martin

05.09.2020 15:27

I have only used ParallelGC because it had worked great until I started testing correlation matrices with 200+ strategies.

hankeys

06.09.2020 09:36

Do you think you will ever trade 200 strategies? Otherwise, what is the purpose of computing the correlation for so many strategies?

Martin

06.09.2020 11:16

No. The purpose of computing the matrix is that after building strategies with genetic settings, some strategies are highly correlated, and I have noticed that filtering them by correlation first, instead of running the slower tests on all of them, saves a lot of time. So, before starting slow Monte Carlo tests, I now build portfolios of 199 strategies (which takes about 3-6 seconds on my computer), export the correlation matrix, and run a small Python script that sorts the strategies by my fitness ratio and deletes those with a correlation above x. This way, in about one minute I reduce the number of strategies to around 20-40% of the original amount.
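The filtering step described above can be sketched as a greedy pass: walk the strategies from best to worst fitness and keep one only if its correlation with every strategy already kept is at most the threshold. This is a hypothetical reconstruction, not Martin's actual script; the function name, argument layout and the choice of signed (rather than absolute) correlation are assumptions.

```python
import numpy as np

def filter_correlated(names, fitness, corr, max_corr):
    """Greedy filter (hypothetical): keep strategies in descending fitness order,
    skipping any whose signed correlation with an already-kept one exceeds max_corr."""
    order = np.argsort(fitness)[::-1]  # indices, best fitness first
    kept = []
    for i in order:
        # Compare only against strategies that survived so far
        if all(corr[i, j] <= max_corr for j in kept):
            kept.append(i)
    return [names[i] for i in kept]

# Hypothetical example: 4 strategies with an exported correlation matrix
names = ["A", "B", "C", "D"]
fitness = [1.2, 3.4, 2.0, 0.5]
corr = np.array([
    [1.0, 0.9, 0.1, 0.2],
    [0.9, 1.0, 0.3, 0.8],
    [0.1, 0.3, 1.0, 0.95],
    [0.2, 0.8, 0.95, 1.0],
])
print(filter_correlated(names, fitness, corr, max_corr=0.7))  # ['B', 'C']
```

Because the best strategy of each correlated cluster is visited first, it is the one that survives; lower-fitness near-duplicates are dropped.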

Tamas

12.11.2020 15:08

Status changed from New to Fixed

Added some improvements to reduce memory consumption